“Hollywood is probably one of the hardest, if not the hardest place to bring voice into,” said Oz Krakowski, chief business development officer at Deepdub, an AI dubbing company.

Before any deal could be discussed, some clients sent questionnaires (“100 pages” long) scrutinizing Deepdub's models and the ethics behind them. Hollywood was slow to adopt generative AI, Krakowski said.

The reluctance had roots: Hollywood's caution toward generative AI deepened after the Writers Guild of America strike in 2023, which put the industry's relationship with AI technology under a microscope it has yet to put down.

AI that clears its throat

The resistance wasn't only about ethics. The technical bar for Hollywood was genuinely higher than for most industries.

“Now, you can do conversational, you know, Amazon Polly … we look at them as older technologies, to generate voices. They still work, to some extent, in certain use cases,” Krakowski said, “but it won't work with television.”

Unlike corporate training videos or e-learning content, entertainment demanded something far harder to replicate. Krakowski described the problem as one of emotion. When humans speak in their native language, they do more than just enunciate. Their words have a specific lilt and tonality that is uniquely human.

AI doesn’t breathe, clear its throat, or make any of the other non-speech noises that listeners expect when humans talk. Addressing these issues required novel architectures, new algorithms, and bespoke training sets built from the ground up to produce human-like voices.

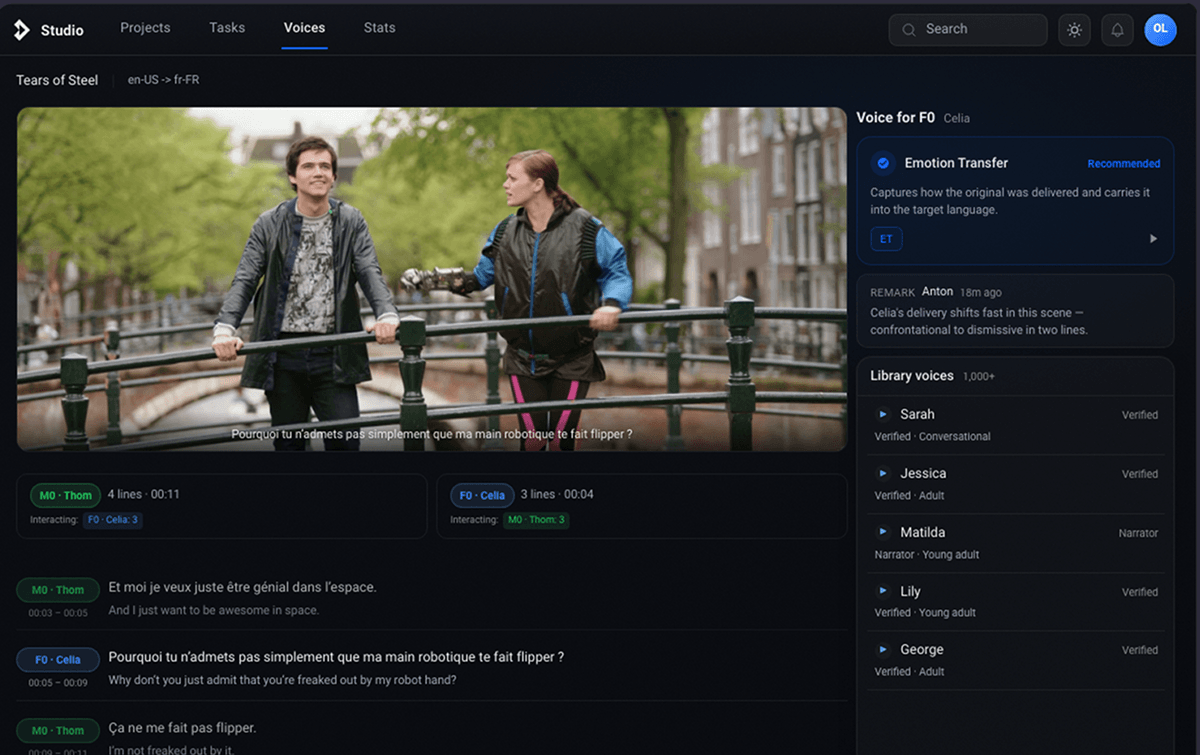

“The point is not to push a button and generate a movie,” said Anton Dvorkovich, CEO of Dubformer. Credits: Dubformer

In Deepdub’s case, it also took a lot of faith. Founded by brothers Ofir and Nir Krakowski, Deepdub spent more than five years developing its technology before receiving $3.5 million in seed funding in March 2025.

“We focused on emotions with a high level of focus on quality, on speed, and making it available. We spent about, I would say, two, three years, really figuring out the market, going through different phases with the evolution or the maturing of the market,” Krakowski said.

Push a button, make a movie?

Anton Dvorkovich, CEO and founder of Amsterdam-based Dubformer, started from a different place entirely. His firm's goal was to build tools for creators, not to replace them. “Ultimately,” Dvorkovich said, “the point is not to push a button and generate a movie but instead to enjoy human creativity.”

Dubformer’s technology focuses on localization tools that sound as natural as possible. This includes generating a voice that pronounces words correctly and exhibits the proper pacing and nuance, as well as reproducing acoustic effects, such as ambient reverb or studio echo, that suit a particular scene.

The cost of half a year

The commercial stakes behind both approaches are significant. Expanding into new language markets through traditional dubbing is slow, expensive, and high-risk. “When you dub content,” Krakowski said, “specifically for media and entertainment, there's a lot of risk. Why? Because dubbing takes a lot of time, costs a lot of money and if you're going into a new region, there's a minimum amount of content that you have to dub. You don't even know if it's going to be successful. But you cannot come with one hour; with one episode.”

AI, in Krakowski's framing, was less a replacement for traditional dubbing than a way to compress, without sacrificing quality, timelines that once stretched to months. Of the traditional process, he said: “It's going to take you half a year or four months to actually dub enough content to actually launch a channel or launch a new territory.”

As both Krakowski and Dvorkovich said in separate interviews with The Infinite Loop, Hollywood and the mainstream entertainment industry at large are typically reluctant to integrate generative AI technology. And yet, even if Hollywood remains cautious, AI dubbing as a technology is clearly on the rise.

Voice AI industry leader ElevenLabs raised $500 million in February 2026 at a valuation of $11 billion, more than triple its 2024 figure. Smaller firms such as Linguana, Dubformer, and CAMB.AI have all seen upticks in funding over the past year, with 2026 reaching the highest industry adoption rates to date, according to data from Intel Market Research.

With both VC and industry interest surging through the first quarter of 2026, the general reluctance to adopt AI dubbing technologies is beginning to crumble.

Analysts value the overall AI dubbing market at around $1.16 billion and expect a compound annual growth rate (CAGR) of 14.2% from 2026 to 2035. High adoption rates, coupled with that near-15% growth forecast, point to a bright future for firms currently positioned to scale voice solutions in the entertainment industry.