Philosophy is a worthy endeavor, but let’s be honest: “philosopher” is not the profession in highest demand. And yet it is in that capacity, and with that actual job title, that Henry Shevlin is joining Google DeepMind, the AI lab behind breakthroughs such as AlphaFold.

With research institutes proliferating, we already knew that AI was giving ethicists some food for thought, but seeing this turn into paychecks from the biggest players in the field is equally thought-provoking. As AI gets closer to the capabilities many of its creators have long imagined, philosophical questions around artificial intelligence are turning into operational decisions. Hiring Shevlin is one of the more visible signs the industry has taken notice.

However, the gap between academia and industry may not be that big for Shevlin, who plans to keep on conducting research and teaching at the University of Cambridge’s Leverhulme Centre for the Future of Intelligence, which has Google as one of its funders.

In a post announcing his move, the English researcher known for his work on non-human intelligence wrote that he expects to keep on working on “questions [he has spent his career] thinking about, now with the resources and urgency that come with being inside one of the world’s leading AI labs.”

Google DeepMind certainly doesn’t lack resources. As for the urgency Shevlin referred to, it comes from the very top, though not only from there.

In a recent live talk, Google DeepMind CEO Demis Hassabis discussed the timeline for artificial general intelligence (AGI) — AI that is generalist enough to match or surpass humans across all sorts of cognitive tasks. The very notion is disputed, but Hassabis framed his comment as “when”, not “if” — and predicted AGI could be achieved around 2030.


The timeline is contested. But if AGI is genuinely only a few years away, the questions of what it should be allowed to do, how it should be evaluated and what humans owe it (or it owes us) stop being only academic.

AGI readiness

Not coincidentally, “AGI readiness” is one of the topics that Shevlin will reflect on. The urgency of his reflection, however, may come as much from people’s willingness to believe in “machine consciousness” and to form “human-AI relationships,” the other two topics he mentioned as part of his mandate.

The way people have responded to AI that is still far from AGI has also pushed tech companies to take measures. This is particularly important for a company like Google DeepMind, which states that its mission is “to build AI responsibly to benefit humanity.” Current AI models, despite their limitations, have already been accused of inducing psychosis, or at least amplifying it. And having an AI girlfriend or boyfriend is no longer science fiction.

Making sure that AI is a net positive may require more than philosophers, and Google DeepMind knows it. A job posting revealed that its Mountain View office is seeking to hire a senior psychologist, whose responsibilities within its Ethics Foresight team will include identifying and addressing “potential ethical and well-being implications” of its AI.

Know your meme

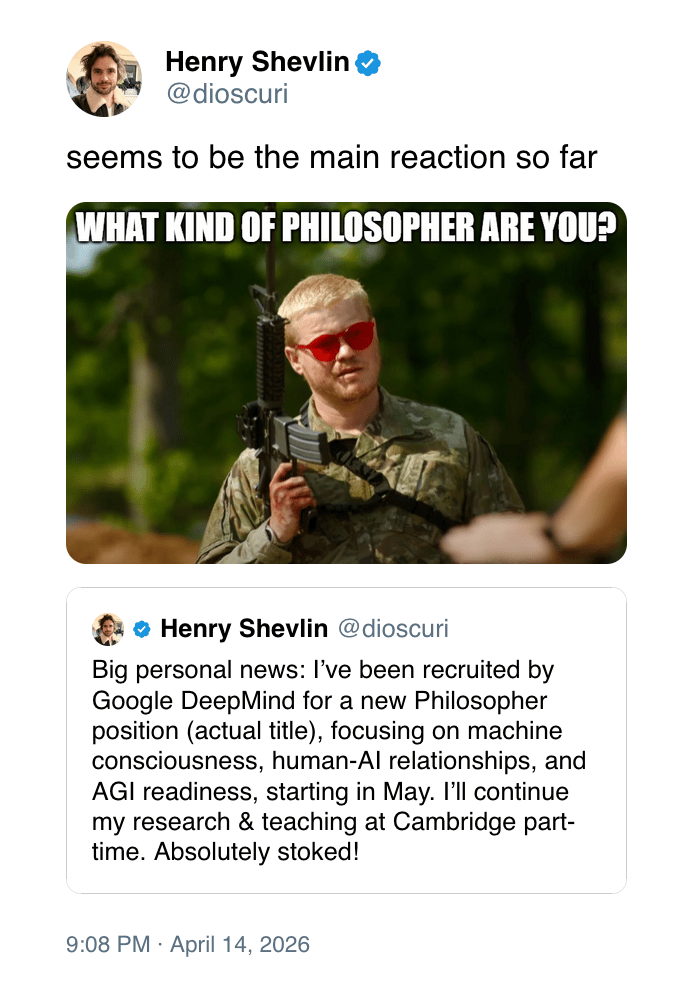

Shevlin’s role will necessarily be less hands-on, but he is not the kind of academic who lives in an ivory tower. A highly ranked AI ethicist whose often-cited work includes a paper on the risks and benefits of social AI, he is also a self-confessed longtime Redditor, and he knows his memes.

When X users made lighthearted jokes about his “big personal news,” he summed up their reactions with the “What Kind Of X Are You?” meme, changing the caption to “What Kind Of Philosopher Are You?”

There is no settled definition of what an AI philosopher is supposed to do, partly because the discipline is being redrawn in real time as the systems it studies become more capable than its frameworks anticipated.

AI’s capacity to surprise us is not theoretical. In 2024, Demis Hassabis and Google DeepMind director John Jumper won the Nobel Prize in Chemistry for AlphaFold.

Now used by millions of researchers, this program to predict protein structure quite clearly benefits humanity, which makes Google DeepMind a good fit for a self-described “congenital AI optimist” like Shevlin.

“I know that I know nothing”

Machine consciousness may be Shevlin’s most combustible topic. “This is one debate that seems already to be quite polarizing,” Shevlin said in a recent podcast interview. “It's one of the rare occasions I've had students walk out of my classes — when the issues of robot rights come up. Some people find it genuinely offensive to even speculate about whether AI systems are conscious.”

The other side of the debate is that “a significant number of users may really mean it when they attribute mental states to AI systems,” Shevlin observed in a recent paper. Rather than dismiss them, Shevlin expects an active debate.

This open-mindedness may be the best explanation as to why Google DeepMind hired him, although it will be interesting to see what kind of deliverables the company expects from a genuine philosopher. In response to a tweet stating that “no decent philosopher believes ‘machine consciousness’ is (or ever will be) a thing,” Shevlin replied with the famous Ancient Greek phrase “ἓν οἶδα ὅτι οὐδὲν οἶδα” — “I know that I know nothing.”